Bradley, an original member of the IBM development team, the company eliminated the Motorola chip from consideration because IBM was more familiar and comfortable with Intel processors. But which to choose? IBM decided early on that its new machine required a 16-bit processor, and narrowed the choices down to three candidates: the Motorola 68000 (the powerful 16-bit processor at the heart of the first Macintosh), the Intel 8086, and its “castrated” cousin, the Intel 8088.Īccording to David J. Obviously, an off-the-shelf system demanded an off-the-shelf microprocessor. It was a novel concept for IBM, which previously emphasized its proprietary technology to the exclusion of all others. Two years later, IBM began work on the model 5150, the company’s first PC to consist only of low-cost, off-the-shelf parts. Since many systems were still 8-bit, the 8088 sent out the 16-bit data in two 8-bit cycles, making it compatible with 8-bit systems. (Photo courtesy of Intel.)Ī few weeks after Morse’s departure, Intel released the 8088, which Morse calls “a castrated version of the 8086” because it used an adulterated version of the 8086’s 16-bit capability. The 8088 chip was built on the same code as the 8086, and its inclusion in IBM’s 5150 PC helped make the 8086’s code an industry standard.

Then a series of seemingly unremarkable events conspired to make the 8086 an industry standard. (The space agency buys electronic relics on eBay to scavenge for the processors.) Eventually it found acceptance in the microcontroller and embedded-applications market, most notably in the NASA Space Shuttle program, which uses 8086 chips to control diagnostic tests on its solid-rocket boosters to this day. It gained a bit of a foothold in the portable computer market (in the form of the 80C86). The 8086 first appeared in a few unremarkable PCs and terminals. The midrange personal-computer market was saturated with cookie-cutter business machines based on the Z80 and running CP/M, the OS du jour of the late 1970s. Upon its release, Morse’s creation hardly took the computing world by storm. “The question was not ‘What features do we have space for?’ but ‘What features do we want in order to make the software more efficient?’” That software-centric approach proved revolutionary in the industry. “For the first time, we were going to look at processor features from a software perspective,” says Morse. Previously, CPU design at Intel had been the domain of hardware engineers alone. Picking Morse was surprising for another reason: He was a software engineer. “If management had any inkling that this architecture would live on through many generations and into today’s … processors,” recalls Morse, “they never would have trusted this task to a single person.” (For more, see our in-depth interview with Morse.) The company’s upper brass picked Morse as the sole designer for the 8086. They turned to Stephen Morse, a 36-year-old electrical engineer who had impressed them with a critical examination of the 8800 processor’s design flaws.

Intel execs maintained their faith in the 8800, but knew they needed to respond to Zilog’s threat somehow. Enter the Architect Former Intel engineer Stephen Morse was the architect of the 8086’s underlying code. We updated the article with improved formatting and a new primary image on June 8, 2018. Intel had yet to come up with an answer to the Z80.Įditor’s note: This article originally published on June 17, 2008. Released in July 1976, it was an enhanced clone of Intel’s successful 8080-the processor that had effectively launched the personal-computer revolution. Zilog had quickly captured the midrange microprocessor market with its Z80 CPU. And Intel’s problems didn’t stop there-it was being outflanked by Zilog, a company started by former Intel engineers.

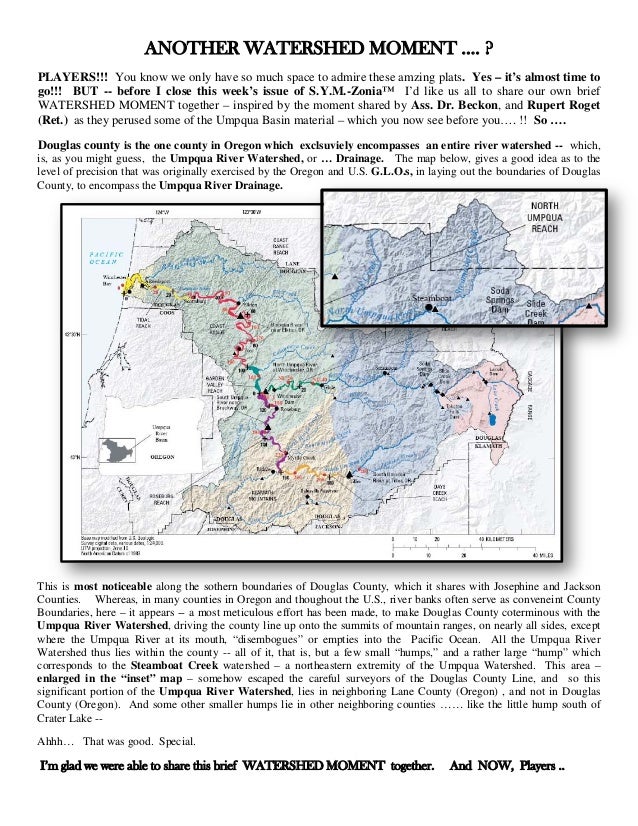

It had encountered numerous delays as Intel engineers found that the complex design was difficult to implement with then-current chip technology. Its advanced multitasking capabilities and memory-management circuitry would be built right into the CPU, allowing operating systems to run with much less program code.īut the 8800 project was in trouble. In an era when most chips still used 8-bit data paths, the 8800 would leapfrog all the way up to 32 bits. They were pinning the company’s hopes on a radically different and more sophisticated processor called the 8800 (later released as the iAPX 432). When development of the 8086 began in May 1976, Intel executives never imagined its spectacular impact.
